Insight and tips on running effective moderated online usability testing

Why we did it

We recently revisited our collective approach to user research and worked on a new product vision with Thames Valley Housing Association (TVHA). One of the actions that came out of this work was the need to run some usability testing for the repairs service. We came across a number of practical challenges with participant recruitment and planning, which we had encountered before. These included:

- Geographically dispersed users across 14,500 homes in the South East of England

- Low take-up and high no-show rates despite a generous incentive

- Lack of walk-in centres apart from main offices in London

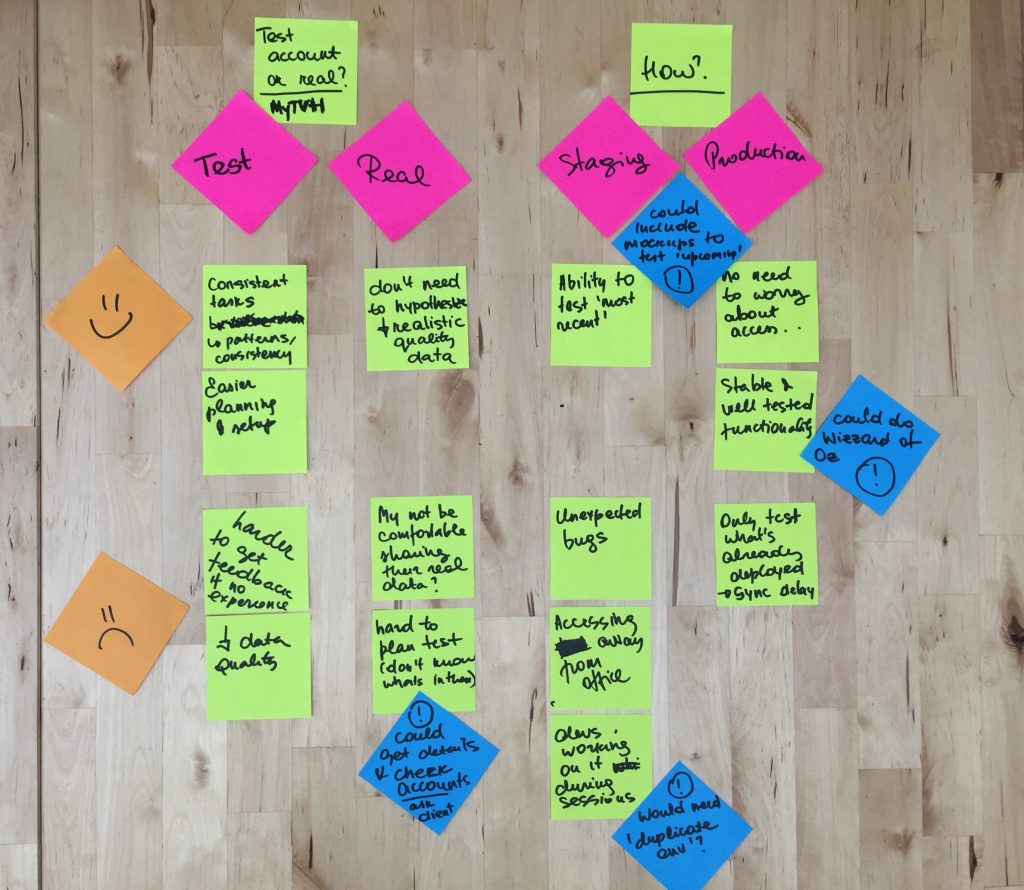

- Technical constraints with the service’s staging environment, which meant it could only be accessed from a small number of locations

How we did it

We sent out invitations to a pre-screened segment of registered users via YouCanBookMe, with the choice to take part online or in person at the TVHA offices in Twickenham.

To conduct usability testing in person we used a selection of well-established tools such as ScreenFlow, but we needed something else to record the online sessions and allow screen sharing and mouse control. We originally tried Webex, but quickly realised that joining the session was too cumbersome for the participants: the application had to be installed and in some cases required a browser plugin, which didn’t always work even when added. We also found that assigning the presenter role and passing mouse control needed quite a bit of explaining.

We also considered Lookback and a few others, but most of these great tools didn’t allow us to pass on mouse control and were limited to a specific operating system. We ended up using GoToMeeting, because it was much easier for the participants to join and connect to audio and video. Most importantly, it included a pre-designed and editable test session, which we included in the reminders we sent to participants.

What we learnt by doing

We already knew some of the shortcomings of not doing research in person: it’s simply harder to run and nonverbal cues are missing, so it is not possible to properly read body language apart from limited facial expressions. It is also hard to judge and deal with moments of silence, because they could be caused by a connection issue rather than by the participant having trouble with the task or forgetting to think aloud.

Some tips from us for running moderated online testing:

- Automate the recruitment process so that participants can book, update and cancel their time slots

- Do get in touch with the participants before the day to establish trust and confidence in something that is already ‘unknown and unnatural’ to most people

- Select a tool which allows participants to test out the session (and remind them to do this)

- Brainstorm ‘What ifs’ and prepare a plan B: devices, browsers, locations, internet connection, no-shows, etc.

- Pilot sessions from various devices and places with someone who isn’t familiar with the tools and ideally is less confident with technology altogether

- Give clear instructions for what to do if participants are having difficulties connecting

- Allow more time to introduce yourself and ‘break the ice’

- Complement online testing with in-person sessions to gain deeper insight

In summary, moderated online testing is very effective if the participants are hard to reach and time and money are tight. Furthermore, it enables access to a greater variety of users and can provide a more realistic setting of use, because people connect from their own devices and are in their natural environment. However, it is prone to technical difficulties and can’t quite provide the same level of qualitative data as in-person testing due to the lack of nonverbal cues. Nevertheless, with a little extra planning and trialling to start with, this type of testing can run smoothly and add great value at low cost.